Artificial intelligence policy decided by professors’ syllabi at Mason.

BY ERICA MUNISAR, EDITOR-IN-CHIEF

In fall 2022, OpenAI launched ChatGPT, which became increasingly popular among college students. However, the widespread use of AI-generated writing raises concerns about the academic integrity of students who use such technology to complete assignments.

The George Mason University Honor Code does not explicitly address the use of artificial intelligence, but it does define cheating as “failing to adhere to requirements (verbal and written) established by the professor of the course.” However, the Department of Computer Science Honor Code, which must be more specific, bans the use of AI-generated code except with explicit permission.

Currently at Mason, the use of AI in the classroom is mostly up to professors, who may opt to label it as a violation of the honor code in their syllabi.

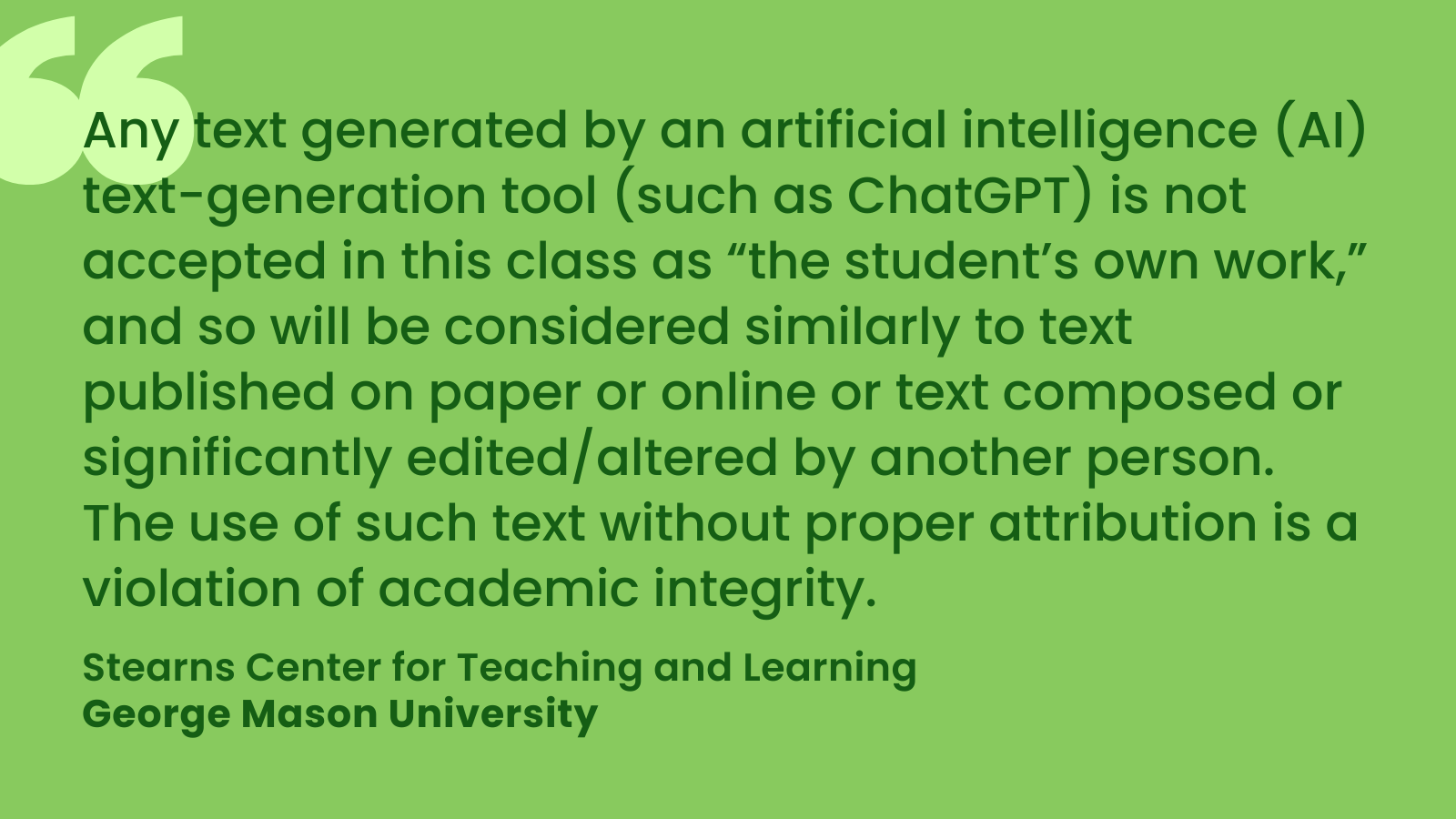

In his COMM305 syllabus, Senior Instructor Lance E. Schmeidler uses suggested syllabus policy language from the Stearns Center, which may prohibit the use of AI. The Center gives recommendations to combat AI dependence, such as clear communication of standards with students, adaptation of assignments, new grading criteria and an emphasis on citations.

Schmeidler emphasizes that the use of ChatGPT should not be met with concern, and may even be encouraged in some courses. “The technology [AI] is still in its nascent stages and while intriguing, it is not yet a significant threat to the integrity of the learning experience. That said, unlike other forms of plagiarism that are less detectable, faculty have the advantage of being early-adopters and guiding students toward effective use of AI tools in their courses. We consider many negative consequences of adoption in the classroom, but over time education will evolve to include the technologies and elevate teaching and learning,” said Schmeidler.

“Some past examples of controversial adoptions include the calculator, PowerPoint, LMS’s, use of smart devices and apps, etc. None of these technologies were available for use in my college classes, though my own school age children have been using them in their classes since before COVID.”

Schmeidler shared how professors detect AI-generated work. “There are several ways AI usage can be detected. First, faculty develop a sense of student voice for the assignments in their courses. When a passage or paper is submitted that is out of character for the expected voice it would naturally raise concern that the author might not have been the student. Next, for a paper assignment submitted through Blackboard, instructors have the option to submit the document for a SafeAssign review. This would bring to light the copying of any previously submitted work, whether drafted by an AI or other author.”

“Finally, generative AI tools, like OpenAI’s ChatGPT also have programs that can detect if written material was the output of the AI system,” said Schmeidler. “Generally, the accuracy of these output detectors is very high (99% or higher), and might be more accurate at detecting AI generated script than programs like SafeAssign are at determining plagiarized work.”

George Mason currently uses SafeAssign through Blackboard, which does not claim the ability to detect AI-generated work.

Virginia universities have responded positively to the use of ChatGPT in the classroom, considering it a valuable tool to assist students while taking steps to prevent academic dishonesty through in-person assignments, proctoring, and other measures.

Universities such as James Madison University currently use AI detection software for submitted work, such as Respondus Monitor for proctored exams. Institutions such as Old Dominion University have released an FAQ page encouraging responsible AI usage and shared ten AI detector resources to combat academic dishonesty.

At a large panel at Virginia Commonwealth University, panelists welcomed the use of AI, noting that AI detection software is not accurate enough for reliable use. “The panelists were unanimous that faculty should not ban the use of the technology in the classroom out of fear of students cheating. Students have access to the technology, and the technology to detect AI-generated content is not very sophisticated. They need to have an open discussion about the technology and where it fits into the classroom.”

Questions have been raised about the accuracy of AI detection software. According to USA Today, one student from the University of California was accused of cheating by GPTZero and found innocent in April. According to the Washington Post, Turnitin, which claims 98% accuracy, misidentified half of the 16 samples submitted in an experiment, labeling them incorrectly as either AI-generated or original work.

Schmeidler says students should not worry about false cheating accusations, and that some professors may be open to the use of AI. “I have a saying when it comes to plagiarism and giving credit sources: When in doubt, cite it out. Just cite your original source and you won’t need to worry about academic integrity and honor code violations,” said Schmeidler.

“Even better, check with professors first, before submitting work, to ask if it’s all right to use unattributed work or generative AI tools like ChatGPT. Students might be surprised how often the answer is yes, as this can be a fun discovery project with the professor. But assuming the answer is no, not asking, and then submitting work that is not your own is never a good idea!”

Finally, ChatGPT itself was asked whether it will one day take over academia, and its answer reflected the differing policies of universities.

“As an AI language model, ChatGPT has the potential to assist students in their academic pursuits by providing them with writing suggestions, grammar corrections, and other language-related support,” said ChatGPT. “However, the idea that ChatGPT or any other AI tool will take over academia is not accurate. AI can never replace the critical thinking skills, creativity, and knowledge that are the hallmarks of a quality education. Moreover, many academic institutions have strict policies in place to prevent academic dishonesty, including the use of AI-generated content without proper attribution.”

“It is true that the use of AI in academia is increasing, but its role is mainly supportive rather than dominant. Instructors and professors will continue to be the primary sources of instruction, evaluation, and guidance for students, and the ethical use of AI tools will be subject to the rules and regulations of individual academic institutions.”

The Office of Academic Integrity did not respond to a request for comment.