BY NINA MOTAZEDI, STAFF WRITER

A study conducted at Mason suggests that wearable technology like Google Glass may be more cognitively efficient than handheld smartphones.

Members of Mason’s Human Factors and Applied Cognition Department worked with researchers from Drexel University and the University of Pennsylvania to test whether augmented reality wearable displays, such as Google Glass, increase productivity and improve performance in everyday life.

The study, entitled “Into the Wild: Neuroergonomic Differentiation of Hand-Held and Augmented Reality Wearable Displays during Outdoor Navigation with Functional Near Infrared Spectroscopy,” was published in Frontiers in Human Neuroscience in May. The experiment took place on Mason’s campus and was conducted by the late Professor Raja Parasuraman and then-Human Factors and Applied Cognition graduate student Ryan McKendrick.

“The reason this study was groundbreaking was for a few reasons. One, because it’s using neuroimaging completely in the real world in an unconstrained situation, and it’s showing the way that, I believe, you’re supposed to do neuroergonomic research,” McKendrick said.

The field of neuroergonomics aims to improve technology so that it works as effectively as possible with our brains and bodies. McKendrick said this study serves as an example of how he believes such research should be done.

The study aimed to measure these benefits by examining whether Google Glass improves people’s cognitive functioning by increasing situation awareness and reducing mental workload.

To better understand situation awareness, imagine you are driving on campus. As you drive, you notice the trees, the road signs and the people walking around. Your brain is processing the vital information in the environment and trying to make sense of it. As you approach a crosswalk, a family of ducks crosses the street. Your brain immediately perceives this change and projects how many seconds and how many feet you have to brake. This process of perceiving, making sense of and projecting from your surroundings is known as situation awareness, and it is especially critical for complex tasks. The higher your level of situation awareness, the more likely you are to perform the task successfully.

Since situation awareness is linked to working memory capacity, wearable displays can reduce the amount of information that must be held in memory, thus increasing situation awareness. On the other hand, wearable displays can also cause cognitive tunneling: your brain might focus more attention on the display itself, reducing its capacity to attend to other tasks and decreasing situation awareness.

The experiment sought to measure these concepts through responses to secondary tasks and measurements provided by a portable functional near-infrared spectroscopy (fNIRS) device. fNIRS shines near-infrared light into the brain to gauge brain activity indirectly through changes in blood flow, known as hemodynamic changes. All participants wore the fNIRS device for the duration of the experiment.

Participants were split into two groups of 10: one using Google Glass and one using an Apple iPhone 4. All 20 participants were 18 to 29 years old, right-handed and had normal vision. Both groups used Google Maps on their devices to navigate four different routes across Mason’s campus, which took about 45 to 60 minutes in total. While navigating the routes, participants were assigned two secondary tasks to measure their mental workload and situation awareness.

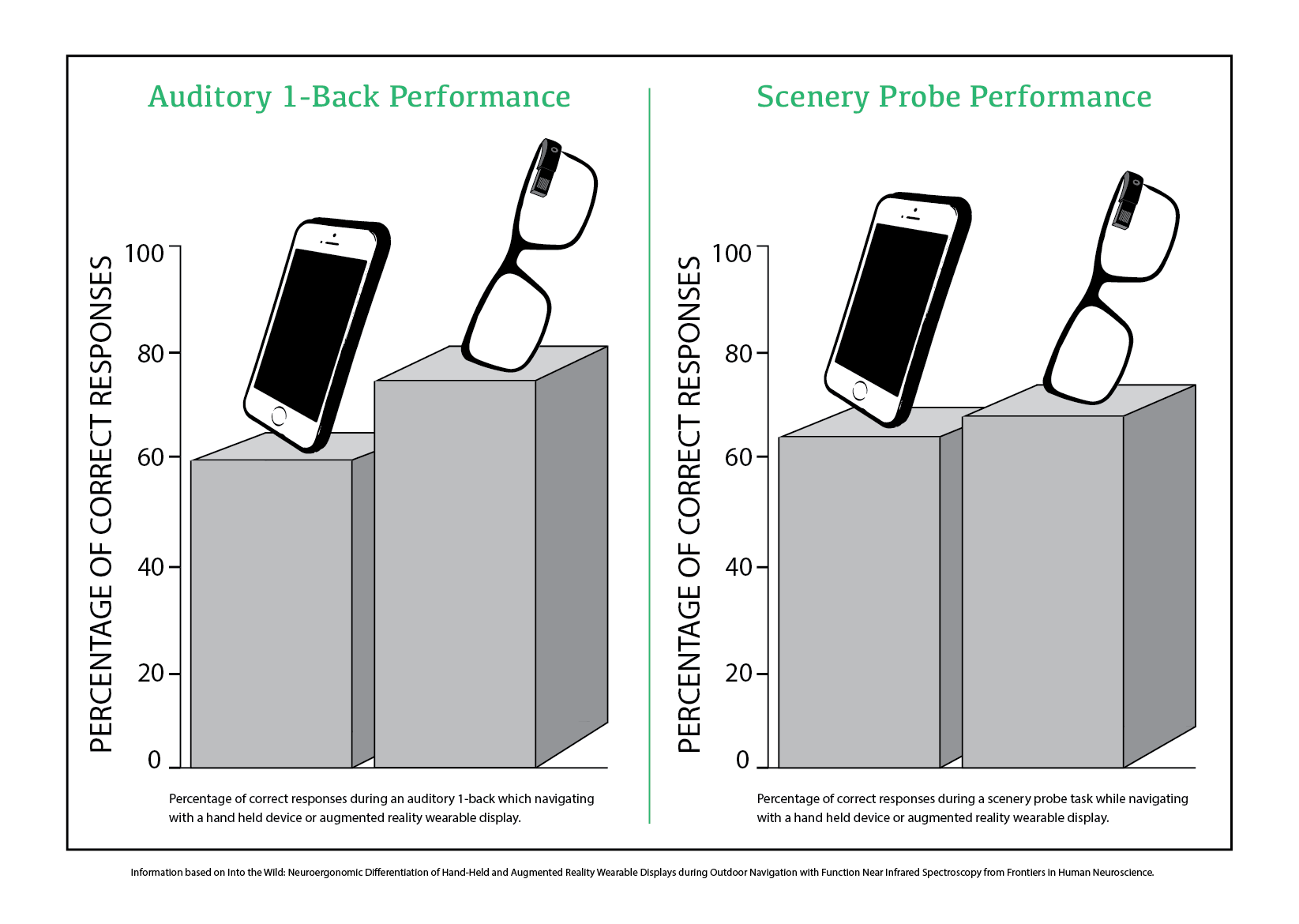

The first task asked participants to listen to a series of sounds and identify whether any two consecutive sounds matched. Responses to this auditory task were used to indirectly measure mental workload.
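As a rough illustration of the matching rule only, and not the software the researchers used, the short Python sketch below flags the positions where a sound matches the one just before it. The function name and the sound labels are hypothetical, invented for this example.

```python
def consecutive_matches(sounds):
    """Return the positions where a sound matches the one just before it."""
    return [i for i in range(1, len(sounds)) if sounds[i] == sounds[i - 1]]


# Hypothetical sequence of sound labels, made up for illustration.
sequence = ["beep", "chirp", "chirp", "tone", "beep", "beep"]

# Positions are counted from zero, so the second "chirp" is position 2.
print(consecutive_matches(sequence))
```

A participant’s responses could then be compared against these positions to count how many matches were caught or missed.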

During the second task, participants were told to be aware of their surroundings and then, after 30 seconds, told to stop walking. The experimenter then asked whether they had seen a specific object, and participants responded yes or no. A total of 10 questions were asked, six of which involved an object that was present in the environment and four of which involved an object that was not.
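One plausible way to tally yes/no answers like these, sketched in Python, is to sort each answer by whether the object was actually present. The article does not say how the researchers scored responses, so the function, its name and the sample answers below are assumptions for illustration, not the study’s method or data.

```python
def score_probes(present, answered_yes):
    """Tally yes/no answers by whether the object was really there.

    present[i] is True if the object in question i was in the environment;
    answered_yes[i] is True if the participant said they had seen it.
    """
    if len(present) != len(answered_yes):
        raise ValueError("each question needs exactly one answer")
    counts = {"hits": 0, "misses": 0, "false_alarms": 0, "correct_rejections": 0}
    for was_present, said_yes in zip(present, answered_yes):
        if was_present and said_yes:
            counts["hits"] += 1
        elif was_present:
            counts["misses"] += 1
        elif said_yes:
            counts["false_alarms"] += 1
        else:
            counts["correct_rejections"] += 1
    return counts


# Six present objects and four absent ones, as in the task described above.
present = [True] * 6 + [False] * 4
# Hypothetical answers from one participant, made up for illustration.
answers = [True, True, False, True, True, True, False, True, False, False]

result = score_probes(present, answers)
errors = result["misses"] + result["false_alarms"]
print(result, "errors:", errors)
```

Separating misses from false alarms keeps the two kinds of error distinct, since saying no to an object that was there is a different mistake from saying yes to one that was not.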

The hemodynamic changes measured by fNIRS and the responses to the secondary tasks showed that participants using Google Glass had less interference and less brain activity during the auditory task than the smartphone users, which presumably indicates a lower mental workload.

The results showed no meaningful difference between the two groups on the situation awareness task, in which participants were asked about their surroundings; however, brain activity in the two groups differed when participants made a mistake.

“What we did find is that when an error was made in the situation awareness task, the way that the user’s brain responds, whether it was in the Glass group or the phone group, was different…if a Glass user made an error on the situation awareness task, the brain activity went up. If a phone user made an error their brain activity went down,” McKendrick said.

From these differences, the experimenters inferred that phone users made errors because they stopped attending to their surroundings for the situation awareness task and focused solely on navigating.

Google Glass participants, however, displayed increased brain activity when an error was made.

After hearing the results of the study, sophomore Andrew Nicholson was not surprised.

“Obviously it’s not the same as having it right in front of your eyes, but being able to see information that matters without all of the other distractions involved with picking up your phone makes a world of difference in efficiency and attention,” Nicholson said via email.

McKendrick believes the way to move both this field and technology forward is to combine the two.

“It’s all about testing technology. One of the problems with Google and a lot of these other tech companies is that they take what they call an agile approach…[This means] that we’re going to give you the minimal viable product, and the user is then going to be the test subject, effectively. At the same time, when they do determine what the minimal viable product is they don’t do any research at all on what’s going on in your brain,” McKendrick said.

If these companies made use of neuroergonomic research, they would be able to provide products that are optimal for the brain and body, McKendrick said.

As for getting consumers to invest in wearable displays, it seems tech companies have additional economic and social hurdles to overcome.

“As far as glasses, I might be interested in the future, but the price point right now is daunting. I also think smart glasses are a little too Black Mirror. We don’t always need a screen in front of us,” Nicholson said.